Lens compares objects in your picture to other images, and ranks those images based on their similarity and relevance to the objects in the original picture. Lens also uses its understanding of objects in your picture to find other relevant results from the web. Lens may also use other helpful signals, such as words, language, and other metadata on the image’s host site, to determine ranking and relevance.

When analyzing an image, Lens often generates several possible results and ranks the probable relevance of each result. Lens may sometimes narrow these possibilities to a single result. Let’s say that Lens is looking at a dog that it identifies as probably 95% German shepherd and 5% corgi. In this case, Lens might only show the result for a German shepherd, which Lens has judged to be most visually similar.

In other cases, when Lens is confident it understands which object in the picture you’re interested in, Lens will return Search results related to the object. For example, if an image contains a specific product, like jeans or sneakers, Lens may return results providing more information about that product, or shopping results for the product. Lens may also rely on available signals, like the product’s user ratings, to return such results. In another example, if Lens recognizes a barcode or text in an image (for example, a product name or a book title), Lens may return a Google Search results page for the object.
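
The idea of ranking several candidates and sometimes narrowing them to one result can be illustrated with a small sketch. This is not how Lens is implemented: the `Candidate` class, the `rank_candidates` function, and the 0.9 threshold are all hypothetical, chosen only to mirror the German shepherd and corgi example.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    label: str
    score: float  # hypothetical confidence score between 0 and 1


def rank_candidates(candidates, single_result_threshold=0.9):
    """Sort candidates by score, highest first.

    If the top candidate clears the (assumed) threshold, return only that
    candidate; otherwise return the full ranked list.
    """
    ranked = sorted(candidates, key=lambda c: c.score, reverse=True)
    if ranked and ranked[0].score >= single_result_threshold:
        return ranked[:1]
    return ranked


results = rank_candidates([
    Candidate("corgi", 0.05),
    Candidate("German shepherd", 0.95),
])
print([c.label for c in results])  # prints ['German shepherd']
```

With a less clear-cut split, say 60% and 40%, the same function would return both candidates in ranked order instead of a single result.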

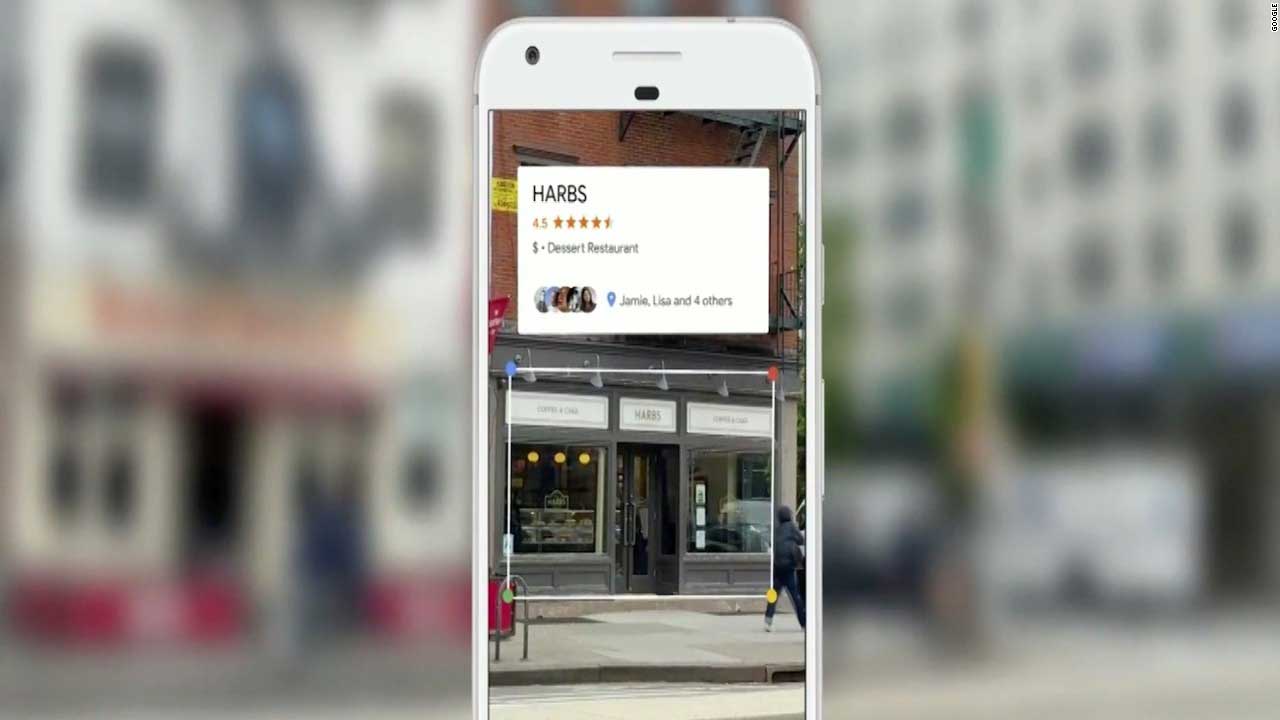

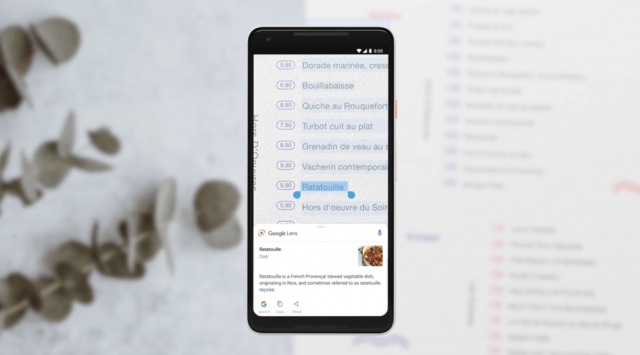

Google Lens is a set of vision-based computing capabilities that can understand what you’re looking at and use that information to copy or translate text, identify plants and animals, explore locales or menus, discover products, find visually similar images, and take other useful actions. Google Lens lets you search what you see. Using a photo, your camera, or almost any image, Lens helps you discover visually similar images and related content, gathering results from all over the internet.

The Lens app on mobile devices already allows image search, but you need the extra step of saving a photo or screenshot before you can search for something shown on screen. Google is updating Lens in the coming months, so it could add on-screen image search with the help of Google Assistant.

How on-screen image search is done via Assistant

As shown by Google in its clip, a user can search for an image or video displayed on the screen by summoning Assistant and tapping 'Search screen' in the pop-up menu. While the demo was in the Messages app, Google says that on-screen image search will be supported in other apps and on web pages.

On most non-Pixel Android phones and on Apple’s iPhones, activating Assistant requires pressing the home button. Alternatively, you can trigger Assistant by opening the Google keyboard and tapping the microphone icon. In addition, you will need to install both Lens and Assistant to use the upcoming image search feature.

Since Google is still rolling out search via Assistant, users can instead capture a photo or screenshot and go through Lens or Google Photos to do an image search. On desktops, Chrome already has an image search function: right-click a photo and choose Search image with Google.

Google rolls out multi-search for Lens and Search

At the same time, Google announced that multi-search on Lens and Search is now available globally. As with a regular image search, you can refine the result by adding details, such as a color for a car, or by looking for nearby places to buy the item you searched for.
